Cost-Effective Monitoring: Benefits of Simple Extensometers for Slope Movement Detection

June 17, 2024

Extensometers are devices that measure deformation, including extension, compression, and shear. They come in various shapes, sizes, capacities, and temperature ratings, and can either contact the feature directly or take optical measurements without contact.

Proprietary extensometers and downhole inclinometers for detecting slope movements are highly accurate and used at many sites. In an emergency, however, they can take a long time to source and, if drilling is required, may not be safe to install.

In many operating mines, "low tech" extensometers serve in an emergency as a temporary, rapid, practical, and economical alternative while more advanced instrumentation is sourced and installed.

Simple and Accessible Tool

Simple extensometers can be set up without machinery using everyday materials immediately available at most work sites. Readings can be taken visually, and remotely if a line of sight is available from a safe location. These devices are easy to install, simple to read, and sufficient for determining whether an area is stable or experiencing movement.
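As a rough illustration of how a series of manual readings might be turned into a "stable or moving" call, the sketch below compares the latest reading with the first and reports cumulative change and average rate. The `Reading` and `assess` names, the millimetre and hour units, and the `tolerance_mm` value are all assumptions made for this example; a real tolerance would depend on how repeatably the device can be read on site.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    hours: float      # elapsed time since the first reading, in hours
    length_mm: float  # measured distance between the two reference points, in mm


def assess(readings, tolerance_mm=2.0):
    """Return (cumulative_mm, rate_mm_per_hr, moving) for a series of readings.

    tolerance_mm is an assumed reading repeatability: a change smaller than
    this is treated as measurement noise rather than ground movement.
    """
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    first, last = readings[0], readings[-1]
    elapsed = last.hours - first.hours
    if elapsed <= 0:
        raise ValueError("readings must be in increasing time order")
    cumulative = last.length_mm - first.length_mm  # positive means extension
    rate = cumulative / elapsed                    # average rate over the period
    moving = abs(cumulative) > tolerance_mm
    return cumulative, rate, moving


# Hypothetical readings taken over a 12-hour shift
log = [Reading(0, 1500.0), Reading(6, 1500.5), Reading(12, 1506.0)]
cumulative, rate, moving = assess(log)
print(f"cumulative {cumulative:.1f} mm, rate {rate:.2f} mm/h, moving: {moving}")
```

An average rate hides acceleration between readings, so in practice the individual intervals would also be worth checking.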

Examples of simple extensometers are shown below:

Reliable and Efficient Monitoring

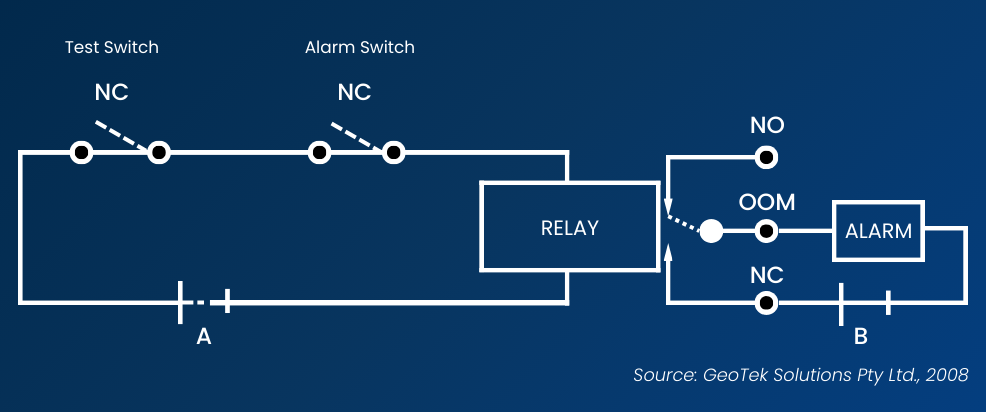

A practical example involves placing the extensometer perpendicular to the anticipated direction of movement. If movement occurs, a relay (essentially a magnet) is pulled away from the alarm system, completing the alarm circuit and triggering the alarm. The system is powered by one or two 12 V vehicle batteries. Consider integrating simple extensometers into your monitoring systems for a reliable and efficient method to detect ground movements.
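The trip behaviour described above can be modelled in a few lines. This is only a logical sketch, not a wiring design: the `TripAlarm` class, the `trip_gap_mm` parameter (the displacement at which the magnet is pulled clear), and the latching behaviour (the alarm stays on once tripped) are assumptions made for illustration.

```python
class TripAlarm:
    """Toy model of a magnet-and-switch alarm on a simple extensometer."""

    def __init__(self, trip_gap_mm: float):
        # Assumed displacement at which the magnet is pulled away from the switch
        self.trip_gap_mm = trip_gap_mm
        self.latched = False

    def update(self, displacement_mm: float) -> bool:
        """Feed the current displacement; return True while the alarm is sounding."""
        if displacement_mm >= self.trip_gap_mm:
            # Magnet pulled clear: the alarm circuit completes
            self.latched = True
        # Assumption: once tripped, the alarm stays on until someone resets it
        return self.latched

    def reset(self) -> None:
        """Manual reset after the site has been inspected."""
        self.latched = False


alarm = TripAlarm(trip_gap_mm=10.0)
for displacement in (2.0, 6.0, 11.0, 4.0):
    print(displacement, alarm.update(displacement))
```

Latching is chosen here so that a brief movement that later relaxes is not missed; whether a physical system latches depends on how the circuit is built.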

A simple alarm system can be added as shown below.